We're excited to introduce a firmware update for the S100 RTK receiver that enhances its stand-alone accuracy.

This improvement can greatly benefit customers using the S100 in locations where RTK correction services are unavailable and where sub-meter CEP accuracy is required.

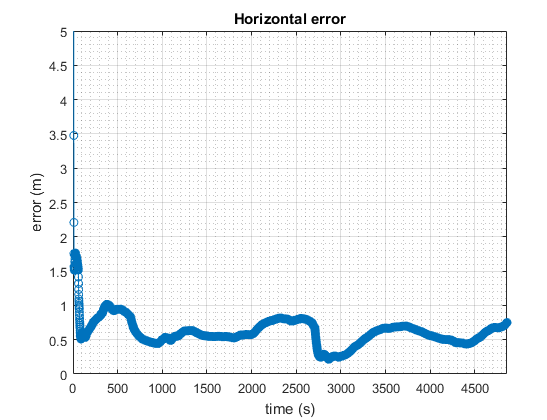

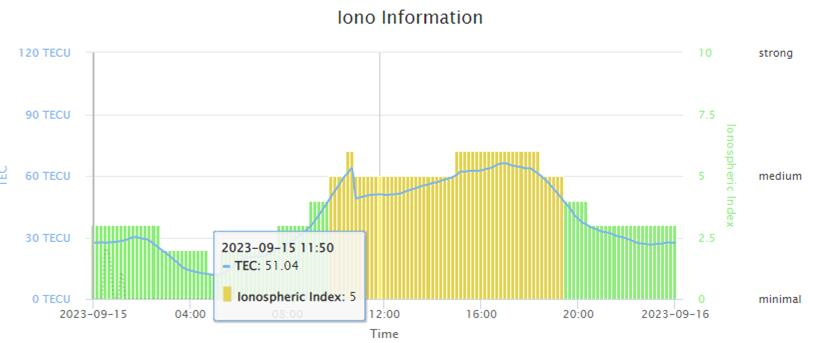

Below is the sub-meter accuracy test result from a period of higher ionospheric activity.
